AuroraGPT

Sam Foreman 2025-07-31

- 🎯 AuroraGPT: Goals

- 🧪 AuroraGPT: Open Science Foundation Model

- 🧰 AuroraGPT: Toolbox

- 🌌 Aurora

- 🤝 Teams

- 🎯 Goals

- 🏋️ Challenges: In Practice

- 💾 Training: 2T Tokens

- 🍹 Blending Data, Efficiently

- 📉 Loss Curve: Training AuroraGPT-7B on 2T Tokens

- ✨ Features

- ✨ Features (even more!)

- 🤔 Evaluating Models on Scientific Applications

- ⚖️ Evaluating FM Skills for Science: Criteria

- 🧬 MProt-DPO: Scaling Results

- 🧬 MProt-DPO: Scaling Results

- 🚂 Loooooooooong Sequence Lengths

- 📓 References

- ❤️ Thank you!

- 📑 Bibliography

🎯 AuroraGPT: Goals

AuroraGPT: General purpose scientific LLM, broadly trained on a general corpus plus scientific papers, texts, data

- Explore pathways towards a “Scientific Assistant” model

- Build with international partners (RIKEN, BSC, others)

- Multilingual: English, Japanese (日本語), French, German, Spanish

- Multimodal: images, tables, equations, proofs, time series, graphs, fields, sequences, etc.

Figure 1: Image from Hannibal046/Awesome-LLM

- Here to talk about AuroraGPT, Argonne’s internal effort to build a general purpose scientific LLM, broadly trained on a general corpus of text + scientific {papers, text, data}

- As part of this effort, we plan to…

- Explore pathways, build with international partners, multi-{lingual, modal}

- Rough timeline of the project and deliverables:

- 202{3,4}: text-only models, plan to release a series of {7B, 70B, 1T} models

- 202{4,5}: Basic multi-modal models

- 202{5,6}: Advanced scientific multimodal models

- AuroraGPT: Exascale Pre-Training of Large Language Models on Diverse Accelerators

- argonne-lcf/Megatron-DeepSpeed: Large Model Training: any scale, any accelerator

- Thoughts:

- I’ll probably try to include:

- {tensor, pipeline, sequence}-parallelism

- DeepSpeed integration (ZeRO offloading, activation checkpointing, …)

- Robust mechanisms for automatic experiment {configuration, tracking, …}

- Support for modern (and experimental!) optimizers

- Large batch training


- Goals

- Issues with existing models

- AuroraGPT

- Project Details

- Teams, Ongoing Efforts

- Scientific Evaluations

- Scaling Results

- MProt-DPO

- aeris(??)

🧪 AuroraGPT: Open Science Foundation Model

Figure 2: High-level overview of AuroraGPT project

- AuroraGPT will be a publicly distributed, open source foundation model for open science

- Is being trained on:

- Scientific / engineering structured data

- General text, media, news, etc.

- Large amounts of low to medium quality data

- Much less high quality data (that is publicly available for use)

- This data is then cleaned, processed, de-duplicated and used for the initial pre-training phase of the model

- The vast majority of the overall compute is spent during this initial pre-training phase

- This is the group I help to lead and will be talking a bit about today

- The initial pre-training phase is currently underway

- Eventually, given a bit of time, effort and magic, the model will be ready for fine-tuning and additional training for a variety of downstream tasks

- The pretrained model will then be handed off for additional fine-tuning on a variety of downstream tasks

- Scientific discovery

- Accelerate scientific tasks

- Digital twins

- Inverse design

- Code optimization

- Accelerated simulations

- Autonomous experiments

- Co-design

- It is becoming increasingly clear that LLMs have the potential to drastically accelerate computational science

- We’ve seen this already for {GenSLMs, Weather / Climate / Earth Systems Modeling, Particle Physics, etc.}

🧰 AuroraGPT: Toolbox

- Datasets and data pipelines (how do we deal with scientific data?)

- Software infrastructure and workflows (scalable, robust, extensible)

- Evaluation of state-of-the-art LLMs (how do they perform on scientific tasks?)

[!NOTE]

🚂 Training

argonne-lcf/Megatron-DeepSpeed

Large Model Training: Any Scale, Any Accelerator

[!IMPORTANT]

🏃‍♂️ Running

argonne-lcf/inference-endpoints

Inference endpoints for LLMs, hosted @ ALCF

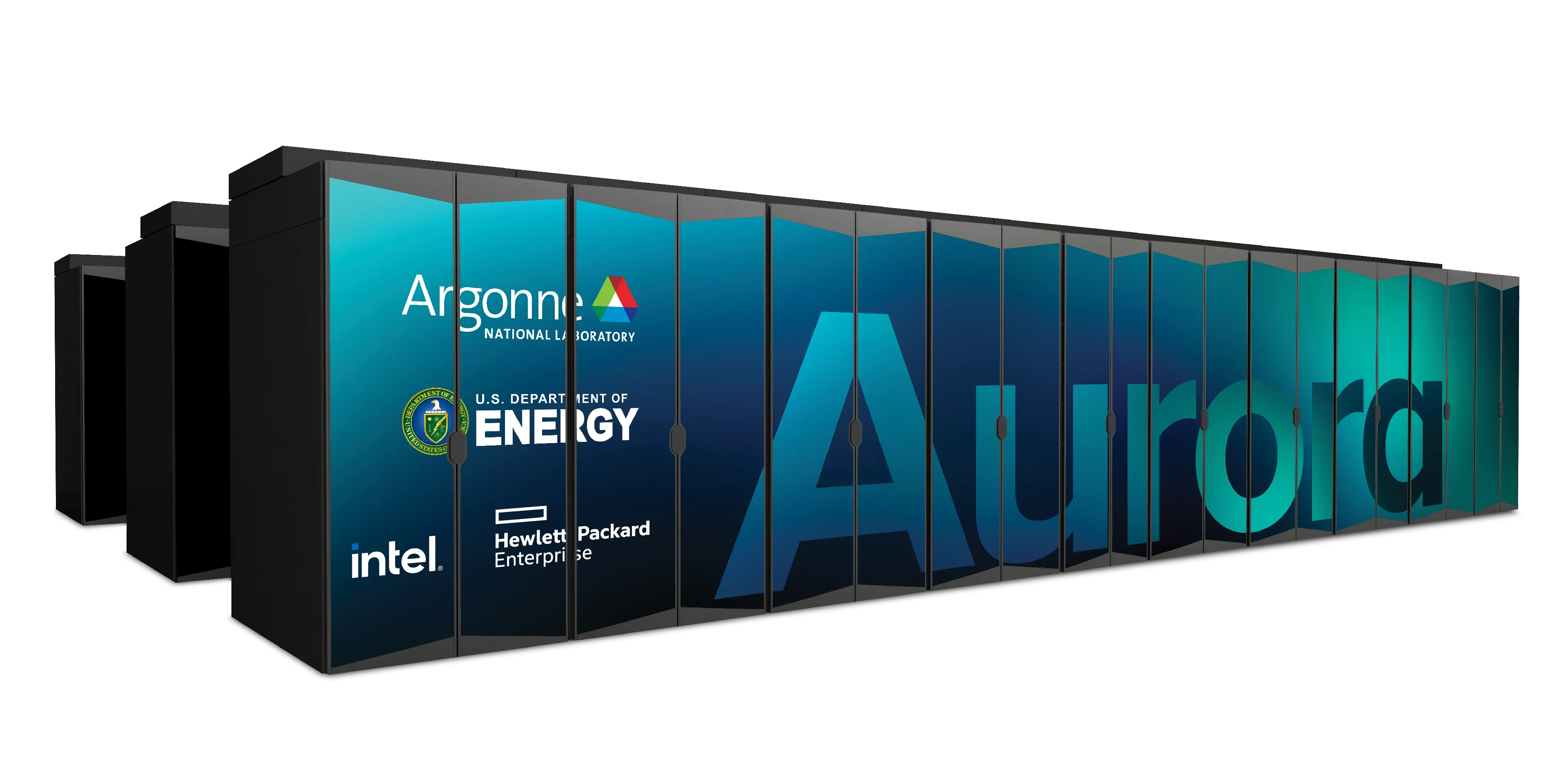

🌌 Aurora

Table 1: Aurora Specs

| Property | Value |
|----------|-------|
| Racks | 166 |
| Nodes | 10,624 |
| CPUs | 21,248 |
| GPUs | 63,744 |
| NICs | 84,992 |
| HBM | 8 PB |
| DDR5c | 10 PB |

Figure 3: Aurora: Fact Sheet.
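The totals in Table 1 are consistent with a uniform per-node layout. The short check below uses only the numbers from the table; the per-rack and per-node figures are derived by division here, not quoted from the fact sheet.

```python
# Totals copied from Table 1.
racks, nodes = 166, 10_624
cpus, gpus, nics = 21_248, 63_744, 84_992

# Derived quantities: a zero remainder means the total divides evenly.
nodes_per_rack, rack_rem = divmod(nodes, racks)
per_node = {
    name: divmod(total, nodes)
    for name, total in {"CPUs": cpus, "GPUs": gpus, "NICs": nics}.items()
}

print(f"nodes per rack: {nodes_per_rack} (remainder {rack_rem})")
for name, (count, rem) in per_node.items():
    print(f"{name} per node: {count} (remainder {rem})")
```

Every division comes out exact: 64 nodes per rack, and 2 CPUs, 6 GPUs and 8 NICs per node.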

🤝 Teams

- Planning

- Data

- Aggregate existing data and generate new (synthetic) data

- Models / Training

- Pre-train a series of models on publicly available data

- Post-Training

- Fine-tuning, alignment, reinforcement learning

- Evaluation

- Skills, trustworthiness, safety, robustness, privacy, machine ethics

- Inference

- Model serving, API development / public-facing web services

- Distribution

- Licensing, generating and distributing artifacts for public consumption

- Communication

Generating, curating / aggregating, cleaning / understanding new data for training, including: MCQs + scientific narratives, new scientific data modalities (gene sequences, geospatial data, …)

🍎 Training LLMs

- Want to minimize cost of training

- Maximize throughput(?)

- Data parallelism takes us only so far (McCandlish et al. 2018)…

- Possible directions:

- Large batch training (?)

- new (second order?) optimizers

- Better tokenization schemes (no tokenizers ?)

- Better data (?)

- Alternative architecture(s) (?)

- Diffusion / flow-matching

- Sub-quadratic attention (state space models, …)

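The limit on data parallelism can be made concrete with the trade-off reported by McCandlish et al. (2018): the number of optimizer steps S and the number of training examples E needed to reach a given loss roughly satisfy (S/S_min - 1)(E/E_min - 1) = 1, with critical batch size B_crit = E_min / S_min. The sketch below evaluates that relation; the values of `S_MIN` and `B_CRIT` are hypothetical and chosen only to show the shape of the curve.

```python
def steps_and_examples(batch_size, s_min, b_crit):
    """Steps S and examples E needed at a given batch size, following
    (S/S_min - 1)(E/E_min - 1) = 1 with B_crit = E_min / S_min."""
    e_min = s_min * b_crit
    steps = s_min * (1.0 + b_crit / batch_size)
    examples = e_min * (1.0 + batch_size / b_crit)
    return steps, examples

# Hypothetical values, for illustration only.
S_MIN, B_CRIT = 10_000, 1_024

for b in (64, 1_024, 16_384, 262_144):
    s, e = steps_and_examples(b, S_MIN, B_CRIT)
    print(f"B={b:>7}: steps={s:>10.0f}  examples={e:>14.0f}  "
          f"steps/S_min={s / S_MIN:.2f}")
```

Well past B_crit, a larger batch barely reduces the number of steps while the number of examples processed (and so the compute) keeps growing roughly linearly with the batch size.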

🎯 Goals

We need our implementation to be:

- 💯 Correct

- Consistent across systems

- Requires being able to run the same code on multiple different machines

- Independent of hardware and communication library (e.g. CUDA, ROCm, XPU, CPU, MPS, …)

- 🚀 Scalable

- Performant across thousands of GPUs

- Highly configurable and extensible

- Parallelizable across (tensor, pipeline, sequence) dimension(s)

- Robust against hardware, network, filesystem, transient failures
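One common ingredient of robustness against transient failures is retrying an operation with exponential backoff. The sketch below is a generic illustration, not code from Megatron-DeepSpeed or ezpz; `retry` and `flaky_read` are hypothetical names.

```python
import time

def retry(fn, attempts=5, base_delay=0.01, retriable=(OSError,), sleep=time.sleep):
    """Call fn(); on a retriable error wait base_delay * 2**k and try again."""
    for k in range(attempts):
        try:
            return fn()
        except retriable:
            if k == attempts - 1:
                raise  # out of attempts: surface the original error
            sleep(base_delay * 2 ** k)

# Hypothetical flaky operation: fails twice, then succeeds.
calls = {"n": 0}

def flaky_read():
    calls["n"] += 1
    if calls["n"] < 3:
        raise OSError("transient filesystem error")
    return "checkpoint-bytes"

result = retry(flaky_read)
print(result, "after", calls["n"], "calls")
```

Only the exception types listed in `retriable` are retried, so genuine bugs still fail fast instead of being masked.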

🏋️ Challenges: In Practice

This is incredibly difficult in practice, due in part to:

- Brand new {hardware, architecture, software}

- Lack of native support in existing frameworks (though getting better!)

- General system stability

- 10k+ nodes, 100k+ XPUs

- network performance

- file system stability (impacted by other users !)

- many unexpected difficulties occur at increasingly large scales

- Combinatorial explosion of possible configurations and experiments

- hyperparameters, architectures, tokenizers, learning rates, …
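To see how quickly the number of configurations grows, consider a full grid over a handful of axes. The search space below is hypothetical; none of the values are taken from the AuroraGPT experiments.

```python
from itertools import product
from math import prod

# Hypothetical search space; every value here is illustrative.
space = {
    "optimizer": ["adamw", "muon", "sophia"],
    "learning_rate": [1e-4, 3e-4, 1e-3],
    "tokenizer": ["tokenizer-a", "tokenizer-b"],
    "num_layers": [24, 32, 48],
    "global_batch_size": [1024, 4096],
}

# Size of the full grid, and the grid itself as a list of config dicts.
num_configs = prod(len(values) for values in space.values())
configs = [dict(zip(space, values)) for values in product(*space.values())]

print(num_configs)
print(configs[0])
```

Five small axes already give 3 x 3 x 2 x 3 x 2 = 108 distinct runs, each of which is a large-scale training job.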

💾 Training: 2T Tokens

- Training a fixed model on trillions of tokens requires:

- Aggregating data from multiple different corpora (e.g. ArXiv, Reddit, StackExchange, GitHub, Wikipedia, etc.)

- Sampling each training batch according to a fixed distribution across corpora

- Building indices that map batches of tokens into these files (indexing)

The original implementation was slow:

- Designed to run serially on a single device

- Major bottleneck when debugging data pipeline at scale
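The sampling and indexing steps can be sketched as a greedy assignment: each global sample goes to whichever corpus is currently furthest below its target share. This is a minimal serial illustration, not the Megatron-DeepSpeed implementation; the function name, corpus names and weights are all hypothetical.

```python
def build_blending_indices(weights, num_samples):
    """Assign each global sample to a corpus so that running counts track
    the target weights; returns (corpus_id, index_within_corpus) lists."""
    total = sum(weights)
    probs = [w / total for w in weights]
    counts = [0] * len(probs)
    corpus_index, sample_index = [], []
    for i in range(num_samples):
        # Pick the corpus that is furthest below its target share so far.
        target = max(i, 1)
        errors = [p * target - c for p, c in zip(probs, counts)]
        k = errors.index(max(errors))
        corpus_index.append(k)
        sample_index.append(counts[k])
        counts[k] += 1
    return corpus_index, sample_index

# Hypothetical corpus names and weights, for illustration only.
corpora = ["arxiv", "github", "wikipedia"]
weights = [0.5, 0.3, 0.2]
corpus_index, sample_index = build_blending_indices(weights, 1_000)
counts = [corpus_index.count(k) for k in range(len(corpora))]
print(dict(zip(corpora, counts)))
```

The loop is inherently sequential, since every assignment depends on the running counts, which is one reason a single-device version becomes a bottleneck at trillions of tokens.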

🍹 Blending Data, Efficiently

- 🐢 Original implementation:

- Slow (serial, single device)

- ~ 1 hr/2T tokens

- 🐇 New implementation:

- Fast! (distributed, asynchronous)

- ~ 2 min/2T tokens (30x faster !!)

Figure 4: Time spent preparing 2T tokens
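One way to picture the difference between the two implementations: the per-corpus indices are independent of one another, so they can be built concurrently rather than one after another. The toy sketch below uses Python's standard `concurrent.futures` in a single process; it does not reproduce the distributed, multi-rank implementation, and `build_index` and the document lengths are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor
from itertools import accumulate

def build_index(doc_lengths):
    """Return the start offset of each document in a flat token stream."""
    return [0, *accumulate(doc_lengths)][:-1]

# Hypothetical per-corpus document lengths (in tokens).
corpora = {
    "arxiv": [120, 80, 200],
    "github": [50, 50],
    "wikipedia": [300, 10, 90, 40],
}

# Serial baseline: one corpus after another.
serial = {name: build_index(lengths) for name, lengths in corpora.items()}

# Concurrent version: submit every corpus at once, then gather the results.
with ThreadPoolExecutor(max_workers=len(corpora)) as pool:
    futures = {name: pool.submit(build_index, lengths)
               for name, lengths in corpora.items()}
    parallel = {name: fut.result() for name, fut in futures.items()}

print(parallel["arxiv"])
```

Both versions produce identical indices; only the scheduling differs.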

📉 Loss Curve: Training AuroraGPT-7B on 2T Tokens

✨ Features

- 🕸️ Parallelism:

- data, tensor, pipeline, sequence, …

- ♻️ Checkpoint Converters:

- Megatron ⇄ 🤗 HF ⇄ ZeRO ⇄ Universal

- 🔀 DeepSpeed Integration:

- ZeRO Offloading

- Activation checkpointing

- AutoTP (WIP)

- ability to leverage features from DeepSpeed community
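The parallelism dimensions multiply. Assuming the common convention that the world size factors as data x tensor x pipeline x sequence, the data-parallel degree is whatever remains once the other degrees are fixed. The helper below is a hypothetical illustration of that bookkeeping, not Megatron-DeepSpeed's group construction, and the tensor and pipeline degrees are made up.

```python
def data_parallel_size(world_size, tensor=1, pipeline=1, sequence=1):
    """Data-parallel degree left over after fixing the other dimensions."""
    model_parallel = tensor * pipeline * sequence
    if world_size % model_parallel != 0:
        raise ValueError(
            f"world_size={world_size} is not divisible by "
            f"tensor*pipeline*sequence={model_parallel}"
        )
    return world_size // model_parallel

# 3200 nodes x 12 XPUs per node (figures from the MProt-DPO scaling slide).
world = 3200 * 12
dp = data_parallel_size(world, tensor=4, pipeline=2, sequence=1)  # hypothetical degrees
print(world, dp)
```

With these made-up degrees, 38,400 ranks split into 4,800 data-parallel replicas of 8 ranks each.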

✨ Features (even more!)

- 🧗 Optimizers:

- Support for many different optimizers:

- Distributed Shampoo, Muon, ADOPT, Sophia, LAMB, GaLore, ScheduleFree, …

- See full list

- Large batch training

- 📊 Experiment Tracking:

- Automatic experiment and metric tracking with Weights & Biases

🔭 LLMs for Science

source (@tenderizzation)

ChatGPT: explain this image

🤔 Evaluating Models on Scientific Applications

- What to measure?

- Knowledge Extraction, Retrieval, Distillation, Synthesis: LLM is provided a question or instruction and a truthful answer is expected

- Text Grounded: Answers are expected to be fully grounded on peer-reviewed references to support responses

- Reasoning: LLMs are expected to solve deductive (prove a theory or hypothesis from formal logic and observations) and inductive (validate / explain observations from theories) problems

- Creativity: A creative answer is expected from a question or instruction

- thoughtful dialogue, coding, etc.

⚖️ Evaluating FM Skills for Science: Criteria

- Criteria for all of the above:

- Correctness of facts

- Accuracy of solutions and inferences

- Reliability: consistently good in quality or performance

- Speed: how fast to produce a response

- # shots: how many examples are needed for good quality

- Extent of prompt engineering
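Several of these criteria can be measured with a small harness. The sketch below scores correctness (majority answer matches the reference), reliability (all repeated runs agree) and speed; it assumes `model` is any callable mapping a prompt to an answer string. `evaluate`, `toy_model` and the questions are hypothetical stand-ins, not part of the AuroraGPT evaluation suite.

```python
import time
from collections import Counter

def evaluate(model, questions, answers, repeats=3):
    """Score correctness, reliability (agreement across repeats) and speed."""
    correct, consistent, elapsed = 0, 0, 0.0
    for question, answer in zip(questions, answers):
        outputs = []
        for _ in range(repeats):
            start = time.perf_counter()
            outputs.append(model(question))
            elapsed += time.perf_counter() - start
        majority, votes = Counter(outputs).most_common(1)[0]
        correct += majority == answer
        consistent += votes == repeats
    n = len(questions)
    return {
        "correctness": correct / n,
        "reliability": consistent / n,
        "seconds_per_response": elapsed / (n * repeats),
    }

# Hypothetical stand-in for an LLM: a lookup table with one wrong entry.
table = {"2 + 2": "4", "symbol for gold": "Au", "boiling point of water (C)": "90"}
toy_model = table.get

report = evaluate(toy_model, list(table), ["4", "Au", "100"])
print(report)
```

A deterministic lookup is perfectly reliable but only two-thirds correct, which shows why the criteria need to be reported separately.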

🧬 MProt-DPO: Scaling Results

Figure 5: Scaling results for 3.5B model across ~38,400 GPUs

- ~4 EFLOPS @ Aurora

- 38,400 XPUs = 3200 [node] x 12 [XPU / node]

- 🔔 Gordon Bell Finalist (Dharuman et al. 2024)
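For a sense of scale, the figures above imply a rough average rate per XPU. This is a back-of-the-envelope estimate only: it treats "~4 EFLOPS" as exactly 4e18 FLOP/s and says nothing about numerical precision, which the slide does not state.

```python
nodes, xpus_per_node = 3200, 12
xpus = nodes * xpus_per_node

sustained_flops = 4e18  # "~4 EFLOPS", treated as exactly 4e18 for this estimate
per_xpu_tflops = sustained_flops / xpus / 1e12

print(xpus)                      # 38400
print(round(per_xpu_tflops, 1))  # 104.2
```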

🧬 MProt-DPO: Scaling Results

Figure 6: 3.5B model

Figure 7: 7B model

🚂 Loooooooooong Sequence Lengths

- Working with the Microsoft/DeepSpeed team to enable longer sequence lengths (context windows) for LLMs

- See my blog post for additional details

Figure 8: Maximum (achievable) SEQ_LEN for both 25B and 33B models (See: Song et al. (2023))

📓 References

- argonne-lcf/Megatron-DeepSpeed: For the largest of large language models.

- saforem2/ezpz: Distributed training, ezpz. 🍋

- 📊 See my other slides at samforeman.me/talks

❤️ Thank you!

[!NOTE]

🙏 Acknowledgements

This research used resources of the Argonne Leadership Computing Facility, which is a DOE Office of Science User Facility supported under Contract DE-AC02-06CH11357.

📑 Bibliography

- Refs:

- Wei et al. (2022)

- Animations from The Illustrated Transformer

Dharuman, Gautham, Kyle Hippe, Alexander Brace, et al. 2024. “MProt-DPO: Breaking the ExaFLOPS Barrier for Multimodal Protein Design Workflows with Direct Preference Optimization.” Proceedings of the International Conference for High Performance Computing, Networking, Storage, and Analysis (Atlanta, GA, USA), SC ’24. https://doi.org/10.1109/SC41406.2024.00013.

Hosseini, Ryien, Filippo Simini, Venkatram Vishwanath, Rebecca Willett, and Henry Hoffmann. 2025. “Quality Measures for Dynamic Graph Generative Models.” The Thirteenth International Conference on Learning Representations. https://openreview.net/forum?id=8bjspmAMBk.

McCandlish, Sam, Jared Kaplan, Dario Amodei, and OpenAI Dota Team. 2018. An Empirical Model of Large-Batch Training. https://arxiv.org/abs/1812.06162.

Song, Shuaiwen Leon, Bonnie Kruft, Minjia Zhang, et al. 2023. DeepSpeed4Science Initiative: Enabling Large-Scale Scientific Discovery Through Sophisticated AI System Technologies. https://arxiv.org/abs/2310.04610.

Wei, Jason, Yi Tay, Rishi Bommasani, et al. 2022. Emergent Abilities of Large Language Models. https://arxiv.org/abs/2206.07682.